The A.I. business influencer guys must be stopped

Please let's stop predicting the future of A.I.

One of the handful of things Twitter still has going for it is that it’s the main place people are talking about A.I. It’s obviously not the only place--there’s lots of activity on the homepage of the rationalist-terrorism cell LessWrong, and I’m sure there are many dozen Discord chat rooms of A.I. shitposters and perverts trying to circumvent various guardrails in a quest to make images of “Marge Simpson, 4k, photorealistic, giantess, bare feet, crush like bug, low angle, sexy”--but Twitter is still the central hub for interaction between A.I. researchers and civilians of various stripes. This makes it a pretty essential, and, frankly, sometimes even educational place to hang out and read if you want to follow what’s happening in an attention-grabbing, investment-attracting technological sector, like, say, if you are obligated to produce at least one (1) tech-culture column for your email subscription newsletter every week.

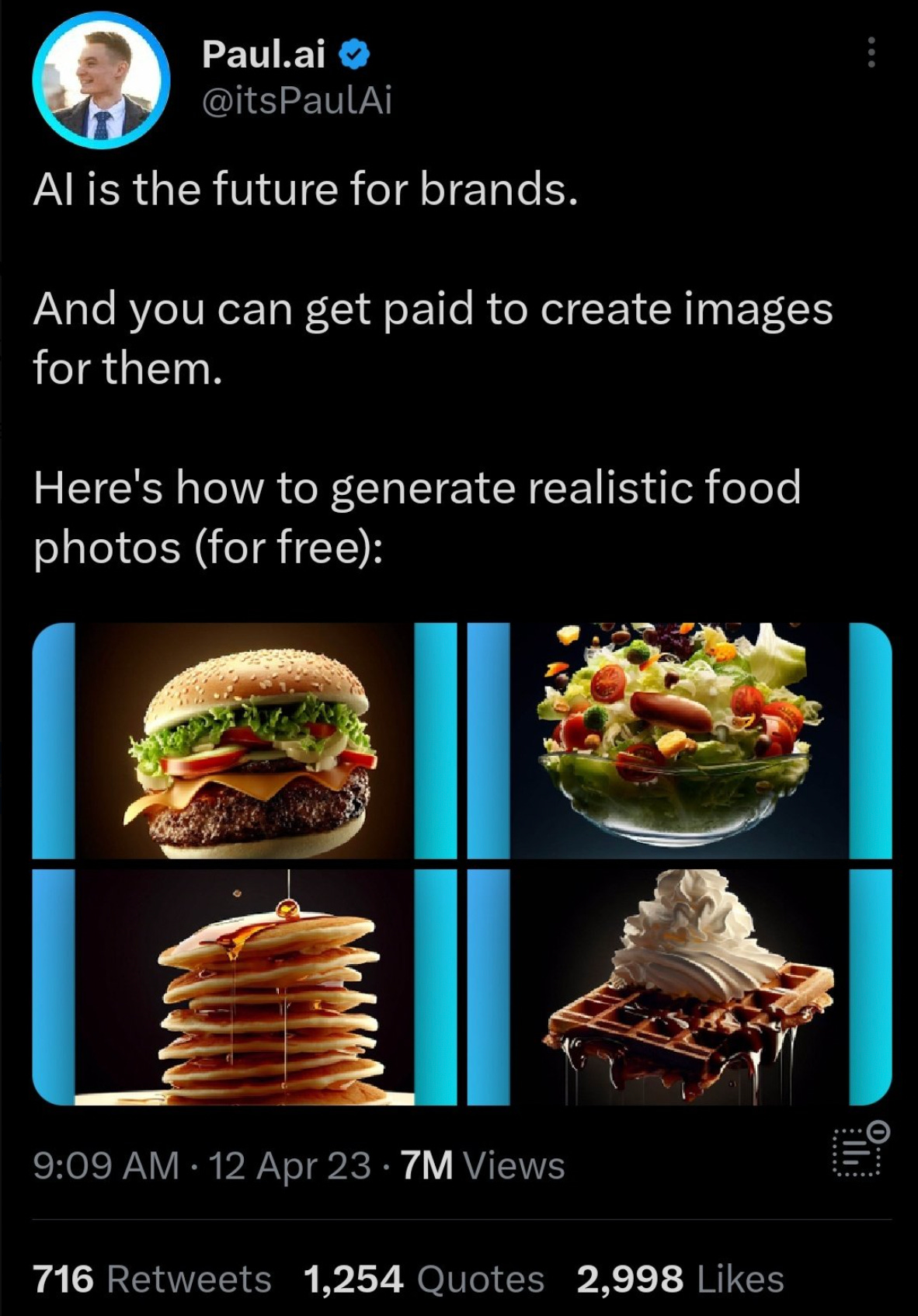

Unfortunately for the sake of general knowledge this means that many of the most public discussions and disputes around A.I. are warped to the structures and prerogatives of the Twitter platform, and not just to “Twitter” in some general sense but in the specific sense of The Elon Musk Twitter Platform of 2023 A.D., with its new focus on paying client-subscribers whose posts are given special weight in the newly prominent “For You” feed. My sense is that Twitter’s new payola structure, combined with Elon Musk’s general role in the cultural firmament as the Most Epic Business Hustler, has tilted the makeup of the algorithmically sorted Twitter feed toward a particularly terrible kind of bullet-point-happy Business-Hacks A.I. Influencer thread-monger. I mean guys like this, basically:

“ItsPaulAI,” who seems to run a bare-bones “AI customer service” business called Answera, spends an awful lot of his time tweeting long, single-line paragraph threads on topics such as “More than 300 new AI products were launched this week [pointless line break] Here are the most trending AI tools.” or “How to use ChatGPT to earn $3000/month. [pointless line break] And it’s not difficult at all. Here are 5 ways to do it.” He isn’t even a particularly noteworthy version of this; he just happened to bubble up this weekend because of the tweet above, which escaped containment and became a viral sensation after a number of people pointed out that generating “realistic food photos” for brands had a high likelihood of violating F.T.C. regulations about misleading food advertising. ItsPaulAI simply deleted the thread and moved on to greener pastures such as “Most people don't know they can use ChatGPT on their phone [pointless line break] And it increases your productivity a lot [pointless line break] Here're 5 ways to access ChatGPT on your phone.”

Basically any time I go to the “For You” page attached to my newsletter Twitter account I’m served up another one of these guys; here’s the one that popped up when I went to check as I was writing this.

It’s not that I don’t think these threads can potentially be accurate or even enlightening (though it’s rare). But it’s very hard to read them (let alone trust them) when they all share a particular, terrible tone--a “LinkedIn But Somehow Worse” style apparently designed for people whose MBAs gave them some kind of learning disorder:

If you are not sure if you have encountered an A.I. Business Influencer thread, there are a few things you can check. Is it written in single-sentence paragraph form? Does at least one tweet contain the phrase “Here’s what you should know”? Does it end with a tout for the tweeter’s newsletter?

There are other versions of this influencer patter elsewhere--on YouTube, as James Vincent and John Herrman have documented, and TikTok, where this one woman is encouraging unpublished authors to “submit” entire manuscripts to ChatGPT to get a “legitimately objective assessment” of their writing. Obviously the pernicious influence of LinkedIn, Tim Ferriss, and other 21st-century Business Hustle Culture is nothing particularly new, and all social networks are chock-full of would-be gurus and influencers across all fields, spewing threaded “five things you need to know” listicles. (One of the earliest signs of doom for Clubhouse, the audio social app that was briefly hot over the course of the pandemic, was how quickly it was overtaken by MLM Hustle Grindset guys.) I just passed one thread offering five tips for hitting the high fastball, and I have to admit that one of my examples above is more accurately characterized as a lawyer business-influencer type who has happened to glom onto A.I. as a potentially viral subject.

But it’s hard not to notice that “A.I.” as a discursive subject is dominated, more than most other tech-hype hyperobjects, by the threaded musings of Influencers whose main claim to expertise is … social-media charisma? Marketing skills? Keyboard macros for the “🧵” emoji? What they share across platforms and fields is less any particular insight and more a deep and abiding faith in the accuracy and power of a still-developing technology about which not much is known (and where what little is known is often kept secret by the private companies developing it). And yet these A.I. Influencers confidently parse new research, show off impressive uses of A.I., make dramatic predictions, etc., and otherwise shape the perception of A.I. for Twitter’s narrow but extremely influential audience of journalists, investors, tech CEOs, etc.

Not all of these influencers are as obviously goony as the LinkedIn bullet-point hustle guys, but they’re all engaged in more or less the same practice, and starting more or less from the same priors. There’s a whole community of weirdos peddling a large language model mysticism who like to pose as cool shitposters, but are unmistakably A.I. Influencers with a better grasp of the jargon of the field and the codes of power-user Twitter. (They call themselves “postrats” or “TCOT.”) One or two steps up the prestige ladder from the Hustle Guys--but still sharing certain precepts, rhetorical styles, and audiences with them--are a set of A.I. prognosticators, usually tech investors by trade, who manage to be experts in not only large language models but also labor markets, political economy, media studies, and philosophy of mind.

But what the hell do these guys know? A few weeks ago Paul Krugman wrote a newsletter column with the headline “A.I. May Change Everything, but Probably Not Too Quickly.” From my perspective this seemed like a reasonable, hedged assessment, but of course A.I. Influencers responded by recalling (and mocking) Krugman’s infamous 1998 judgment of the internet: “By 2005, it will become clear that the Internet’s impact on the economy has been no greater than the fax machine’s.”

This quote comes up every time Krugman expresses skepticism of something (most often cryptocurrency), but whether he was wrong is, in fact, up for debate. There’s plenty of evidence, which economics-Twitter guys love to bring up, that Krugman was right: Many measures of the economy, productivity in particular, have not seen much meaningful long-term change as a result of widespread connectivity. On the other hand, well, we’ve all lived through the last few decades. How can anyone possibly say that the internet’s impact on the economy has been no greater than the fax machine’s?

But the fact that the debate is ongoing decades later is probably more revealing for our purposes than any settlement in one direction or the other. Surely in the 1990s, the people crowing now about the internet’s plain effects on politics, culture, and social worlds would have also expected it to supercharge economic productivity? It’s worth wondering why that didn’t happen, especially as we try to assess what kinds of transformations A.I. might bring. Sometimes what seem like obvious outcomes never come to pass, while the real futures emerge unexpectedly and accidentally.

Of course, there’s not much alpha to being the Twitter influencer whose take on things is mostly “Not Sure” and “I Don’t Know,” especially when you’re competing with pay-to-play guys who write better marketing copy. It would just be less frustrating if we hadn’t seen this same story play out at different scales dozens of times over the last two decades.

shout out to calacanis, consistently one of the funniest people on twitter while being completely serious. what can i say, the dude just has a comedic gift

Wait, they're actually calling themselves TCOT? Insert trite comment about "first as tragedy..." or "time is a flat circle" here. Are the AI guys going to start getting into Favstar?